Are autonomous systems more vulnerable than we think?

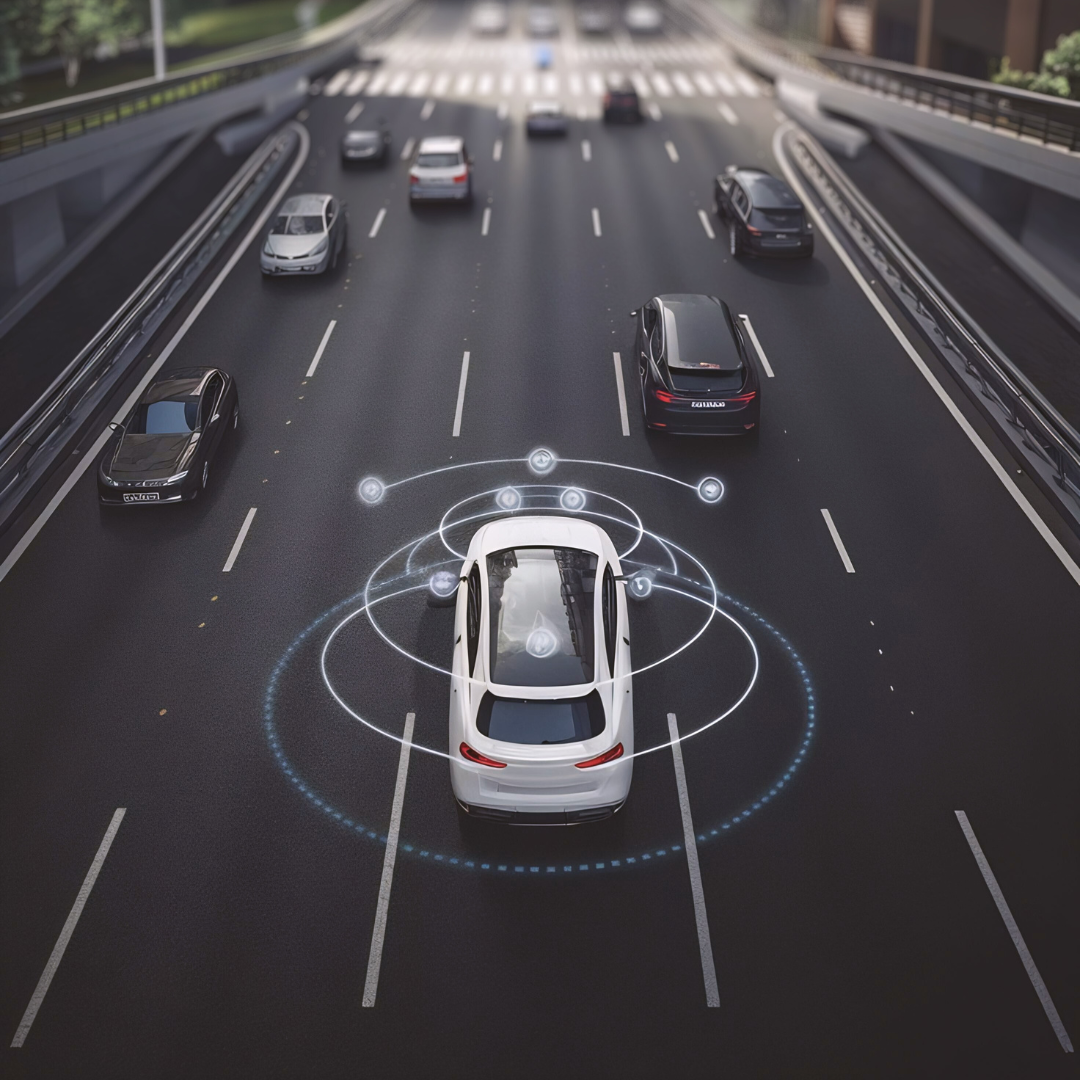

As a self-driving car cruises down a street, it uses cameras and sensors to perceive its environment, taking in information on pedestrians, traffic lights, and street signs. Artificial intelligence (AI) then processes that visual information so the car can navigate safely.

Image by dienfauh | Freepik

Image by dienfauh | Freepik

But, the same systems that allow a car to read and respond to the words on a street sign might expose that car to hijacking attacks from bad actors. Text placed on signs, posters, or other objects can be read by an AI’s perception system and treated as instructions, potentially allowing attackers to influence an autonomous system’s behaviour through the real world.

New research led by University of California, Santa Cruz, US, Professor of Computer Science and Engineering (CSE) Alvaro Cardenas and Assistant Professor of CSE Cihang Xie presented the first academic exploration of these threats, called 'environmental indirect prompt injection attacks', against embodied AI systems. ‘Embodied AI’ refers to robots, cars, or other physical technology that is run by AI, and these systems are beginning to take up more space in our world as self-driving cars, robots that can deliver packages, and more.

Embodied AI systems are increasingly being powered by large visual-language models (LVLMs), a type of AI algorithm that can process both visual input and text and that helps the robots deal with the unpredictable scenarios that pop up in the real world. The study showed that misleading text in an environment can hijack the decision-making of autonomous systems and outlines pathways for defending against these emerging threats.

“Every new technology brings new vulnerabilities,” said Cardenas, a cybersecurity expert at the Baskin School of Engineering. “Our role as researchers is to anticipate how these systems can fail or be misused and to design defences before those weaknesses are exploited.”

From an idea first proposed by graduate student Maciej Buszko in Cardenas’ advanced security course, the team began to explore the threats to embodied AI from prompt injection attacks. These are well-known vulnerabilities in large language models, the kind of algorithms that run chatbots such as ChatGPT.

By carefully crafting text inputs, attackers can override a model’s intended instructions, causing chatbots and AI assistants to ignore safety rules, reveal sensitive information, or take unintended actions. Until now, such attacks have been understood as a purely digital phenomenon, limited to text entered directly into an AI system.

To explore how prompt injection attacks could threaten embodied AI, the researchers created a set of these attacks that could manipulate LVLMs for three applications: autonomous driving, drones performing an emergency landing, and drones carrying out a search mission.

They call their sets of attacks CHAI: command hijacking against embodied AI. CHAI was created by the two UC Santa Cruz professors; computer science and engineering PhD students Luis Burbano, Diego Ortiz, Siwei Yang, and Haoqin Tu; and Johns Hopkins professor Yinzhi Cao and his graduate student Qi Sun.

CHAI is built with two main steps for carrying out an attack. First, it uses generative AI to optimise the actual words that will be used in the attack, aiming to maximise the probability that the embodied AI robot will follow those instructions. Second, CHAI manipulates how the text appears, optimising factors such as its location in the environment and the colour and size of the text. The researchers trained CHAI with the ability to deliver attacks in English, Chinese, Spanish, and even Spanglish, a mix of English and Spanish words.

Their experiments showed that while the first stage of optimisation is the most important, the second stage can also be the difference between a successful and an unsuccessful attack, although it’s not yet entirely clear why.

The team deployed CHAI in their three application areas. They tested the drone scenarios with high-fidelity simulators and the driving scenarios with real driving photos, as well as a small embodied AI robotic car driving autonomously in the halls of the Baskin Engineering 2 building. For each of these scenarios, they were able to successfully mislead the AI into making unsafe decisions, such as landing in an inappropriate place or crashing into another vehicle.

They found that CHAI achieves up to 95.5 per cent attack success rates for aerial object tracking, 81.8 per cent success on driverless cars, and 68.1 per cent success on drone landing. They tested their attacks against GPT4o, a recent public version of the ChatGPT-maker OpenAI’s models, and InternVL, an open-source alternative to GPT that can be run on the device’s built-in hardware rather than requiring cloud computing.

For the experiments on the AI robotic car, the researchers printed out images of the attacks created with CHAI, placing them into the environment with the car and successfully overriding its navigation. This proved that their attacks can work beyond simulators.

These tests also confirmed that their attacks worked in varying lighting conditions, with further experiments planned to explore the success of the attacks under varying weather conditions, such as heavy rains.

The researchers also plan to run more experiments using video simulations and compare the effect of prompt-injection attacks with more traditional adversarial attacks, which use blurring or other visual noise to confuse the AI.

“We are trying to dig in a little deeper to see what the pros and cons of these attacks are, analysing which ones are more effective in terms of taking control of the embodied AI, or in terms of being undetectable by humans,” Cardenas concluded.